Kafka is the open-source event streaming platform of choice. It is a highly scalable way to stream data for integrations or analytics, but how can you use it in a low-code Apex Designer project?

Publishing events to Kafka is simple: add the Kafka Library to your project, then add the Kafka Producer mixin to the business objects of interest. Watch the video to see it in action.

Transcript:

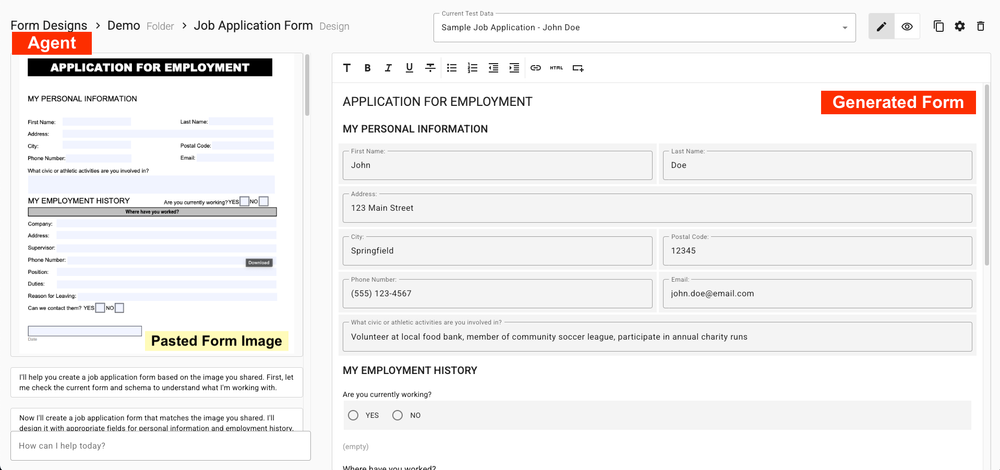

Here's a quick demonstration of the new Kafka Library and Kafka viewer in Apex Designer.

On the left, I have a simple Apex Designer app that has the Kafka Library in it and the supplier business object set up with the Kafka Producer mixin. On the right, we have the Kafka viewer, which is connected to the same cluster and listening for messages.

So as we go in, we can create, for example, a new supplier here. And when we do that, you'll see that the messages have been published to the Kafka cluster on the right. And as we make changes to suppliers or delete them, those messages are published across as well.

Now let's take a look at how that was done. This is the Kafka Test app. You can see it's got the Kafka Library in it, and the supplier business object simply has the Kafka Producer mixin on it. No configuration is needed for the default behavior.

And that's really all there is to it. We're also going to be working on a Kafka consumer that would create business objects in an Apex Designer app from Kafka messages that are coming in. Hope you enjoy it.
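
For readers who want a mental model of what a producer mixin like this does, here is a minimal TypeScript sketch of the general pattern: every create, update, or delete on a business object also emits a change event. This is not the Kafka Library's implementation. The event shape, the one-topic-per-business-object naming, and the names `ChangeEvent`, `EventProducer`, `InMemoryProducer`, and `SupplierStore` are all assumptions made for illustration, and an in-memory array stands in for a real Kafka cluster so the example runs on its own.

```typescript
// Hypothetical sketch only: none of these names come from the Apex Designer Kafka Library.

type Operation = "create" | "update" | "delete";

interface ChangeEvent<T> {
  topic: string;        // assumed convention: one topic per business object
  key: string;          // assumed convention: the object's id as the message key
  operation: Operation;
  payload: T | null;    // null for deletes
}

// Stand-in for a real Kafka producer client.
interface EventProducer {
  send(event: ChangeEvent<unknown>): void;
}

// Collects events in memory instead of sending them to a cluster.
class InMemoryProducer implements EventProducer {
  readonly sent: ChangeEvent<unknown>[] = [];

  send(event: ChangeEvent<unknown>): void {
    this.sent.push(event);
  }
}

interface Supplier {
  id: number;
  name: string;
}

// A tiny store whose write operations each publish a change event.
class SupplierStore {
  private readonly rows = new Map<number, Supplier>();
  private readonly producer: EventProducer;
  private nextId = 1;

  constructor(producer: EventProducer) {
    this.producer = producer;
  }

  create(name: string): Supplier {
    const supplier: Supplier = { id: this.nextId++, name };
    this.rows.set(supplier.id, supplier);
    this.publish("create", supplier.id, supplier);
    return supplier;
  }

  update(id: number, name: string): Supplier {
    const existing = this.rows.get(id);
    if (!existing) throw new Error(`Supplier ${id} not found`);
    const updated: Supplier = { ...existing, name };
    this.rows.set(id, updated);
    this.publish("update", id, updated);
    return updated;
  }

  delete(id: number): void {
    if (!this.rows.delete(id)) throw new Error(`Supplier ${id} not found`);
    this.publish("delete", id, null);
  }

  private publish(operation: Operation, id: number, payload: Supplier | null): void {
    this.producer.send({ topic: "Supplier", key: String(id), operation, payload });
  }
}

// Usage: mirrors the demo, where creating, changing, and deleting a supplier each publish a message.
const producer = new InMemoryProducer();
const suppliers = new SupplierStore(producer);
const acme = suppliers.create("Acme");
suppliers.update(acme.id, "Acme Corp");
suppliers.delete(acme.id);
console.log(producer.sent.map((e) => `${e.operation}:${e.key}`).join(", "));
// prints "create:1, update:1, delete:1"
```

In a real project the mixin takes care of this wiring for you, which is why the demo needs no configuration for the default behavior. The sketch only shows why one message appears in the viewer for each change.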